Table of contents

Best AI Red Teaming Providers: Top 10 Vendors in 2026

What are AI red teaming providers?

As organizations build and deploy AI systems, from customer-facing chatbots to decision engines behind the scenes, the question of trust keeps getting louder. Can these systems be manipulated? Will they behave under pressure? What happens when they don’t?

That’s where AI red teaming providers come in.

These providers specialize in stress-testing AI models, pipelines, and deployments using adversarial thinking. Some offer software platforms. Others offer services led by experienced red teamers. What they share is a mission: expose failure conditions before they show up in production — often working hand-in-hand with complementary practices like AI penetration testing to evaluate deeper model and infrastructure risks.

AI red teaming can help organizations:

- Identify vulnerabilities in language models, vision systems, or other AI components

- Simulate realistic adversarial behavior and abuse cases

- Test resilience to prompt injection, jailbreaks, and data leakage

- Assess model behavior under ambiguous or manipulated input

- Map weaknesses that could impact compliance, safety, or customer trust

Whether you’re adopting AI for the first time or maturing an existing program, it helps to know both what kind of provider fits your needs…and where to start looking.

Editor’s note: Updated information about AI red teaming providers to reflect features and capabilities in 2026.

This article is part of a series of articles about AI red teaming.

Two paths to red teaming: Platforms vs. people

AI red teaming providers generally fall into two camps. Some build tools you can run yourself. Others offer services delivered by expert practitioners. Both have a role to play. What matters is what kind of testing you need and how much support your team wants.

Automated tools: Fast, scalable, and repeatable

These platforms are designed to run red team-style tests automatically or semi-automatically. They’re good for repeatable testing, integrating into CI/CD workflows, and scaling up attack coverage.

- Often include prebuilt test cases and reporting dashboards

- Useful for ongoing validation and regression testing

- May support integration with model APIs, pipelines, or developer environments

Service-based providers: Custom, context-aware, and human-led

These are human-led teams (often offensive security pros or AI specialists) who conduct targeted assessments. They’re valuable for organizations with novel AI use cases or unclear risk exposure.

- Provide tailored, context-aware testing approaches

- Often uncover subtle or complex failures that automation may miss

- Can include regulatory insight, stakeholder reporting, and remediation planning

Some companies combine both approaches, but most lean one way or the other. Knowing the difference helps you avoid shopping for a platform when what you really need is expertise, or vice versa. If you’re exploring the technology side of this space, see our guide to AI red teaming tools for leading platforms and frameworks.

Leading AI red teaming providers: Automated tools

There’s a growing set of platforms built specifically to simulate adversarial attacks against AI systems. These tools help teams run red team-style tests more consistently, often as part of a broader AI risk management program. For a wider view of the market—including both vendors and consulting firms—see our overview of AI red teaming companies.

Here are the best platforms in the space:

1: Mend.io (Mend AI red teaming)

Mend.io is purpose-built for AI powered applications. Their solution to secure AI applications is Mend AI which includes an automated red teaming solution specifically designed for conversational AI applications, including chatbots and AI agents. It provides a robust testing framework that includes 22 pre-defined tests to simulate common and critical attack scenarios such as prompt injections, data leakage, and hallucinations. Beyond these built-in capabilities, the platform also empowers users with the flexibility to define and implement customized testing scenarios, ensuring comprehensive coverage for unique AI deployments.

This solution aims to deliver comprehensive risk coverage, offering detailed insights and actionable remediation strategies to enhance AI system security. By integrating seamlessly into CI/CD pipelines and developer workflows, Mend AI enables continuous security assessments and provides real-time feedback. This allows software development and security teams to catch vulnerabilities early, maintain a strong security posture as their AI systems evolve, and ensure compliance with critical AI security frameworks like NIST AI RMF and OWASP LLM Top 10.

- Targeting for conversational AI applications, including chatbots and AI agents.

- Integrates with CI/CD and developer workflows.

- Real-time feedback to help developers identify and fix issues quickly.

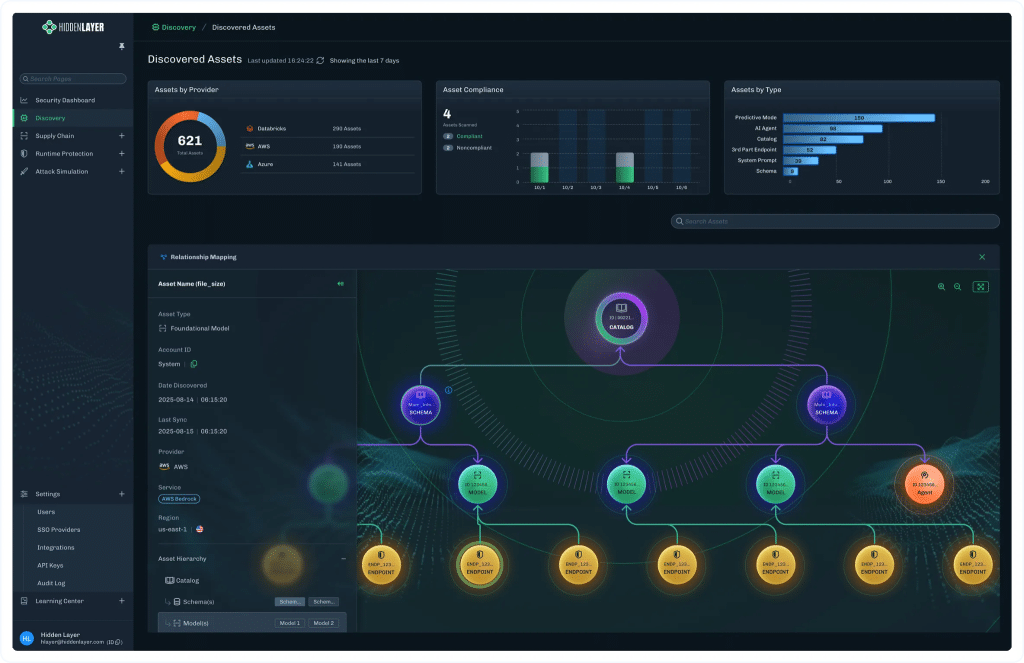

2: HiddenLayer (AutoRTAI)

HiddenLayer’s AutoRTAI is a behavioral testing platform that deploys attacker agents to explore how AI systems HiddenLayer’s Automated Red Teaming solution helps security teams assess vulnerabilities in AI systems through simulated adversarial attacks. It focuses on testing generative AI deployments before release, using automated techniques to perform consistent and repeatable security checks. The platform integrates into broader AI security workflows, allowing teams to evaluate risks with minimal overhead while supporting compliance and structured validation.

Key features include:

- Automated adversarial testing: Simulates expert-level attacks to identify vulnerabilities in AI systems before deployment

- Pre-launch security validation: Integrates into testing workflows to assess risk prior to production release

- Consistent baseline testing: Handles routine security checks to ensure repeatable and standardized coverage

- Platform integration: Part of a broader AI security platform that includes model scanning and detection capabilities

- Compliance-ready reporting: Generates documentation aligned with regulatory and risk management requirements

Source: HiddenLayer

3: Protect AI (RECON)

Protect AI’s RECON is a red teaming platform to systematically test AI applications across a range of threat scenarios. It combines automated attack execution with contextual understanding of how AI systems are built and deployed, allowing teams to identify vulnerabilities across models, prompts, pipelines, and integrations.

Key features include:

- Extensive attack library: Includes hundreds of attack techniques across multiple threat categories, continuously updated for new risks

- Application-aware testing: Generates attacks based on system context such as prompts, guardrails, and pipelines

- Custom attack support: Allows teams to upload and run tailored attack scenarios specific to their environment

- Collaborative red teaming: Enables human testers to guide and refine attacks using natural language inputs

- Standards-based reporting: Maps findings to frameworks like OWASP Top 10 for LLMs and exports results for analysis

Source: Protect AI

4: Mindgard (DAST-AI)

Mindgard provides an AI security platform that applies attacker-style testing to deployed AI systems, focusing on how they behave in real-world conditions. It combines attack surface mapping with continuous red teaming to identify how models, agents, and connected systems can be exploited. The platform emphasizes visibility into AI environments and links testing to runtime protection and enforcement.

Key features include:

- Attack surface mapping: Identifies AI assets, interactions, and exposure points across models and infrastructure

- Continuous red teaming: Runs automated, attacker-aligned tests to uncover exploitable behaviors over time

- Behavioral risk analysis: Evaluates how AI systems respond to adversarial inputs and misuse scenarios

- Runtime defense integration: Connects testing insights with detection and response controls in production

- CI/CD integration: Fits into development pipelines to validate security throughout the software lifecycle

Source: Mindgard

5: Adversa.AI

Adversa.AI provides a red teaming platform focused on identifying vulnerabilities in large language models through continuous testing and threat modeling. It combines automated attack simulation with structured risk analysis to evaluate how AI systems can be manipulated, leak data, or bypass safeguards.

Key features include:

- Threat modeling for LLMs: Profiles risks based on application context and usage scenarios

- Continuous vulnerability audits: Tests against known weaknesses, including OWASP LLM Top 10 issues

- AI-driven attack simulation: Runs automated attacks to uncover novel and environment-specific vulnerabilities

- Coverage of common attack classes: Includes prompt injection, data leakage, jailbreaks, and adversarial inputs

- Combined human and automated testing: Blends tooling with expert-driven analysis for deeper coverage

Source: Adversa

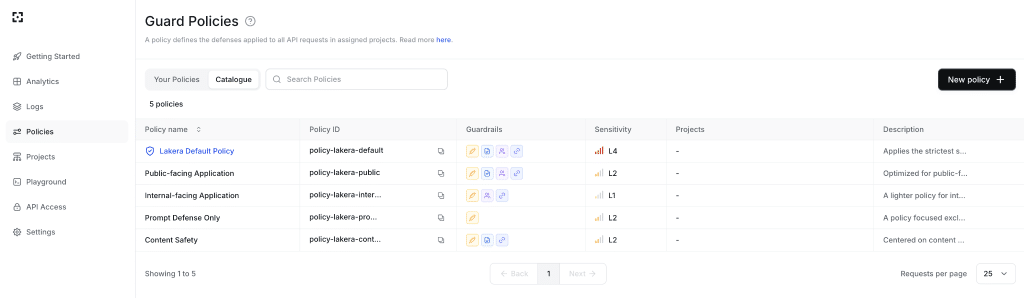

6. Lakera Red

Lakera Red is an AI-native red teaming solution focused on identifying security and safety risks in generative AI systems and translating them into actionable remediation steps. It combines automated attack techniques with risk-based prioritization to help teams understand which vulnerabilities matter most. The platform also emphasizes collaboration between security, product, and engineering teams to improve AI system resilience.

Key features include:

- Risk-based prioritization: Ranks vulnerabilities based on impact and exposure to guide remediation efforts

- Actionable remediation guidance: Provides concrete steps for fixing identified issues across teams

- Automated attack simulation: Tests AI systems for failure modes that traditional testing may miss

- Broad attack coverage: Includes direct manipulation, indirect prompt injection, and infrastructure-level risks

- Threat intelligence integration: Leverages a large community-driven dataset of AI attack techniques

Source: Lakera

Leading AI red teaming providers: Services

Software can go a long way, but there are still places where human-led testing is essential. The following providers specialize in red teaming as a service, bringing deep technical skill and scenario-driven testing to AI deployments. For a more detailed look at these offerings, see our comparison of AI red teaming services.

These are the firms to look at when you need:

- Creative chaining of attack techniques

- Custom assessments of internal models

- External validation of safety controls and guardrails

- Strategic reporting for leadership or regulatory teams

CrowdStrike

CrowdStrike offers AI red teaming services that simulate real-world adversarial behavior against AI systems and integrations. Its approach focuses on emulating attacker tactics to uncover vulnerabilities that could lead to data exposure, system manipulation, or operational disruption. The service combines penetration testing, adversary emulation, and red/blue team exercises tailored to each organization’s AI environment.

Key features include:

- Data protection focus: Helps uncover risks related to sensitive data exposure and unauthorized access

- Adversary emulation: Simulates real-world attack scenarios tailored to specific AI use cases

- LLM-focused penetration testing: Evaluates AI applications against known vulnerability classes such as OWASP Top 10

- Red team / blue team exercises: Tests both offensive and defensive capabilities to improve detection and response

- Integration risk assessment: Identifies weaknesses in AI integrations that could impact system integrity

NRI Secure

NRI Secure provides AI red teaming as part of its broader security consulting and managed security services. Its approach centers on customized assessments and deep analysis of an organization’s security posture, including AI systems and supporting infrastructure. The company emphasizes structured testing, reporting, and alignment with industry standards and compliance requirements.

Key features include:

- Experienced security practitioners: Uses certified experts with backgrounds in offensive security and risk assessment s.

- Customized AI red teaming services: Tailors assessments to specific environments, use cases, and risk profiles

- Integration with broader security services: Combines red teaming with monitoring, consulting, and risk management

- Detailed reporting and analysis: Provides both high-level insights and in-depth technical findings

- Compliance alignment: Supports frameworks such as PCI DSS, HIPAA, and other global standards

Reply

Reply offers AI red teaming services focused on identifying and mitigating risks in generative AI and machine learning systems. Its methodology combines threat modeling, attack simulation, and continuous monitoring to evaluate how AI systems can be misused or manipulated. The approach also incorporates governance and regulatory considerations, particularly in environments subject to emerging AI regulations.

Key features include:

- End-to-end support: Covers both identification of issues and guidance on remediation strategies

- Structured red teaming methodology: Includes threat modeling, attack execution, mitigation, and ongoing monitoring

- Generative AI risk assessment: Evaluates vulnerabilities related to model manipulation and data usage

- Regulatory alignment: Supports compliance with frameworks such as the EU AI Act

- Security and governance integration: Connects technical testing with organizational risk management practices

Synack

Synack delivers AI red teaming through a hybrid model that combines a vetted global network of security researchers with AI-supported testing. Its platform enables continuous and scalable penetration testing, helping organizations uncover vulnerabilities that automated tools alone may miss. The approach focuses on reducing noise while maintaining depth in vulnerability discovery.

Key features include:

- Scalable testing model: Supports rapid deployment and broad coverage across applications and systems

- Crowdsourced expert testing: Leverages a global network of vetted researchers to identify diverse vulnerabilities

- Hybrid human and AI approach: Combines automation with human intuition for deeper analysis

- Continuous penetration testing: Enables ongoing assessments rather than one-time engagements

- Noise reduction and validation: Filters findings to deliver high-confidence, actionable vulnerabilities

How to choose the right AI red teaming provider

When choosing a red teaming provider, brand recognition matters less than finding a tool or partner that fits your actual use case, resourcing level, and risk tolerance. Start by asking a few key questions:

- Are you testing code, models, or both?

- Do you need repeatable, automated validation—or bespoke, context-aware testing?

- Are you working with in-house models or vendor APIs?

- Who will consume the findings—developers, security leads, compliance officers?

- What are your compliance or audit requirements?

Here’s a quick cheat sheet to help you narrow things down:

| Use Case | Best-fit Tools |

|---|---|

| LLM prompt testing | AutoRTAI, Garak, PyRIT |

| Code security after LLM generation | Mend.io |

| Fine-tuned model robustness | Mindgard, Foolbox |

| Regulatory + risk reporting | Mend.io, Protect AI RECON |

| DIY/internal red teaming program | PyRIT, Foolbox, Garak |

If you’re early in your AI journey and need to map the risk landscape, start with asset discovery and pipeline visibility: tools like RECON are made for that. If you’re developing LLM-based products, a combination of behavioral red teaming and code validation is likely to serve you best. And if you’re running into risks you can’t yet name or scope, working with a service-based partner may help clarify next steps.

Why Mend.io deserves a seat at the table

Most red teaming tools focus on detecting issues. Mend.io works where those issues actually land: in code.

Red teaming uncovers risks. Mend.io helps stop them from shipping.

- Scans AI-generated code and configurations for vulnerabilities, insecure patterns, and dependency risks

- Provides real-time feedback directly in developer workflows

- Integrates with CI/CD for continuous coverage

- Complements red teaming by helping security and engineering teams close the loop on remediation

For organizations adopting LLMs in software development, not just model creation, Mend.io plays a critical role in securing what actually gets built.

Final thoughts

The field of AI red teaming is evolving fast, just like the systems it aims to secure. Choosing the right provider depends on matching the scope and shape of your risks to a provider that knows how to find cracks before they turn into breaches.

Whether you’re scaling LLM development, running sensitive workloads, or preparing for regulatory scrutiny, a strong red teaming strategy gives you an edge. The providers in this guide offer a starting point. The next step is deciding what you need to test … and how far you’re willing to go to find out what breaks